Versioning your software helps to give it a history and a sense of progression. Having that automatically taken care of for you lifts a rather tedious burden off your shoulders.

I’ve previously written about my collected notes on modern .NET versioning practices. Today, I’d like to showcase how I put all of it into effect at Bad Echo, resulting in a nicely version-automated working environment.

Quick Rehash on Versioning Concepts

We intend to put semantic versioning concepts into practice with our software. As mentioned in a previous article, two versioning formats shall be employed: a stable and a developmental release format.

Stable Release Version Format

```
Major.Minor.Patch
```

The major, minor, and patch version numbers are simple numeric identifiers.

Per semantic versioning principles, which version number gets incremented is dictated by the type of changes introduced in the new version.

- Major: backward incompatible changes

- Minor: backward compatible, new features

- Patch: backward compatible bugfixes
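To make the increment rules concrete, here is a minimal Python sketch. The `Version` class, the `bump` function, and the "breaking"/"feature"/"fix" labels are hypothetical names invented for illustration; none of them are part of the Bad Echo tooling. The detail worth noticing is that incrementing a number resets every number to its right back to 0.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Version:
    major: int
    minor: int
    patch: int

    def __str__(self) -> str:
        return f"{self.major}.{self.minor}.{self.patch}"


def bump(version: Version, change: str) -> Version:
    """Return the next version for a release containing the given kind of change.

    `change` is one of "breaking", "feature", or "fix"; these labels are
    hypothetical and exist only for this sketch.
    """
    if change == "breaking":
        # Backward incompatible change: bump major, reset minor and patch.
        return Version(version.major + 1, 0, 0)
    if change == "feature":
        # Backward compatible new feature: bump minor, reset patch.
        return Version(version.major, version.minor + 1, 0)
    if change == "fix":
        # Backward compatible bugfix: bump patch only.
        return Version(version.major, version.minor, version.patch + 1)
    raise ValueError(f"unknown change type: {change!r}")
```

Starting from 1.0.12, a fix yields 1.0.13, a feature yields 1.1.0, and a breaking change yields 2.0.0.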

Prerelease Version Format

```
Major.Minor.Patch-PrereleaseId.Build[+Metadata]
```

The prerelease identifier denotes the “degree” of stability and will typically be akin to alpha, beta, etc.

The build number is a counter incremented each time we generate a new build under a particular prerelease identifier.

The build metadata is the short hash for the commit responsible for the build, and it allows us to quickly pull up a snapshot of the code as it was at the time of the build if we need to.

The build number and build metadata do not appear in stable release packages for several reasons, chief among them being that NuGet and GitHub disapprove.

Disapproving powerful entities notwithstanding, a version with more than three numeric identifiers is too busy for a public-facing package.
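One reason this format works well is the way SemVer 2.0.0 orders versions. A prerelease sorts below the stable release with the same Major.Minor.Patch. Dot-separated prerelease identifiers are compared left to right: numeric identifiers are compared numerically, so alpha.10 sorts after alpha.9, and they rank below alphanumeric identifiers. Build metadata is ignored entirely. The sketch below is my own Python rendering of those rules as a sort key. The function names are hypothetical, and it assumes well-formed input.

```python
from typing import Tuple


def _identifier_key(identifier: str) -> Tuple[int, int, str]:
    # Numeric identifiers compare numerically and rank below alphanumeric ones,
    # which compare lexically in ASCII order.
    if identifier.isascii() and identifier.isdigit():
        return (0, int(identifier), "")
    return (1, 0, identifier)


def precedence_key(version: str) -> tuple:
    """Sort key for Major.Minor.Patch[-Prerelease][+Metadata] under SemVer 2.0.0 precedence."""
    core, _, _metadata = version.partition("+")  # build metadata never affects precedence
    core, _, prerelease = core.partition("-")
    major, minor, patch = (int(part) for part in core.split("."))
    if not prerelease:
        # A stable release outranks every prerelease of the same Major.Minor.Patch.
        return (major, minor, patch, 1, ())
    identifiers = tuple(_identifier_key(part) for part in prerelease.split("."))
    return (major, minor, patch, 0, identifiers)
```

Sorting `["1.0.12", "1.0.12-alpha.10+abc1234", "1.0.12-alpha.9+def5678"]` with this key places alpha.9 first, then alpha.10, then the stable 1.0.12.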

That’s your quick rehash complete! Let’s now see how we make the above desired version formats a reality in our GitHub repos.

Build Customization Settings

For our versioning system to hook into our build process and control the version that gets baked into our assemblies, we need to provide it with some points of entry via custom MSBuild properties.

The best way to do this is to author a Directory.Build.props file containing common version-related properties and place it at the root of each repo whose software we want versioned.

Directory.Build.props

```xml
<Project>
  <!--Versioning.-->
  <PropertyGroup>
    <MajorVersion>0</MajorVersion>
    <MinorVersion>1</MinorVersion>
    <PatchVersion>0</PatchVersion>
    <VersionPrefix>$(MajorVersion).$(MinorVersion).$(PatchVersion)</VersionPrefix>
    <AssemblyVersion>$(MajorVersion).0.0.0</AssemblyVersion>
  </PropertyGroup>
  <Choose>
    <When Condition=" '$(BuildMetadata)' != '' AND '$(PrereleaseId)' != '' AND '$(BuildNumber)' != ''">
      <PropertyGroup>
        <VersionSuffix>$(PrereleaseId).$(BuildNumber)</VersionSuffix>
        <InformationalVersion>$(VersionPrefix)-$(VersionSuffix)+$(BuildMetadata)</InformationalVersion>
        <FileVersion>$(MajorVersion).$(MinorVersion).$(PatchVersion).$(BuildNumber)</FileVersion>
      </PropertyGroup>
    </When>
    <Otherwise>
      <PropertyGroup>
        <FileVersion>$(MajorVersion).$(MinorVersion).$(PatchVersion).0</FileVersion>
      </PropertyGroup>
    </Otherwise>
  </Choose>
</Project>
```

The values set for each element here are immaterial, as values established by our build scripts will override them; what matters here is that the properties are defined.

With this build configuration file in place, all code projects found under the root directory of our repo will automatically inherit these version properties and allow our process to version them.
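To see which version strings end up where, the following Python sketch restates the property logic of the Directory.Build.props file above. The function name is hypothetical. It takes the prerelease inputs as strings, the way MSBuild sees them, with an empty string meaning "not provided". It also derives `Version` the way the .NET SDK does by default, which is VersionPrefix alone or VersionPrefix-VersionSuffix when a suffix exists.

```python
def msbuild_version_properties(major: int, minor: int, patch: int,
                               prerelease_id: str = "", build_number: str = "",
                               build_metadata: str = "") -> dict:
    """Mirror the version properties that Directory.Build.props derives."""
    prefix = f"{major}.{minor}.{patch}"
    props = {
        "VersionPrefix": prefix,
        "AssemblyVersion": f"{major}.0.0.0",
    }
    # <When>: all three prerelease properties must be non-empty strings.
    # Note that a build number of "0" is non-empty, so it still counts.
    if build_metadata and prerelease_id and build_number:
        suffix = f"{prerelease_id}.{build_number}"
        props["VersionSuffix"] = suffix
        props["InformationalVersion"] = f"{prefix}-{suffix}+{build_metadata}"
        props["FileVersion"] = f"{prefix}.{build_number}"
    else:
        # <Otherwise>: stable build, so the file version's fourth part is 0.
        props["FileVersion"] = f"{prefix}.0"
    # The SDK's default: Version = VersionPrefix[-VersionSuffix].
    suffix = props.get("VersionSuffix")
    props["Version"] = f"{prefix}-{suffix}" if suffix else prefix
    return props
```

For example, `msbuild_version_properties(1, 0, 12, "alpha", "3", "1a2b3c4")` gives an InformationalVersion of 1.0.12-alpha.3+1a2b3c4, a FileVersion of 1.0.12.3, and an AssemblyVersion of 1.0.0.0.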

Version File

We now need to create a record that acts as the source of truth for software version information in our repo. A simple JSON file with the name version.json, placed in the root directory of our repo, serves this purpose.

version.json

```json
{
  "majorVersion": 1,
  "minorVersion": 0,
  "patchVersion": 12,
  "prereleaseId": "alpha"
}
```

The version file is the source for all current versioning information and is referenced during compilation. All software projects in the repo will reflect this versioning information; if there is a need for a project to have a different version, consider placing it in its own repository.
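The build script reads version.json without validating it. If you want a pre-flight check, a minimal Python sketch follows. The `load_version_settings` function is hypothetical and not part of the Bad Echo build assets. It rejects values that would produce an invalid SemVer 2.0.0 version: negative or non-integer version numbers, and prerelease identifiers that contain characters outside [0-9A-Za-z-] or that are numeric with a leading zero.

```python
import json
import re

# SemVer 2.0.0: each dot-separated prerelease identifier is a non-empty run of
# ASCII alphanumerics and hyphens, and numeric identifiers have no leading zeros.
_IDENTIFIER = re.compile(r"[0-9A-Za-z-]+")


def load_version_settings(text: str) -> dict:
    """Parse version.json text and reject values that would yield an invalid version."""
    settings = json.loads(text)
    for key in ("majorVersion", "minorVersion", "patchVersion"):
        value = settings.get(key)
        # bool is a subclass of int in Python, so exclude it explicitly.
        if not isinstance(value, int) or isinstance(value, bool) or value < 0:
            raise ValueError(f"{key} must be a non-negative integer, got {value!r}")
    prerelease_id = settings.get("prereleaseId")
    if not isinstance(prerelease_id, str):
        raise ValueError("prereleaseId must be a string")
    for identifier in prerelease_id.split("."):
        if not _IDENTIFIER.fullmatch(identifier):
            raise ValueError(f"invalid prerelease identifier: {identifier!r}")
        if identifier.isdigit() and len(identifier) > 1 and identifier.startswith("0"):
            raise ValueError(f"numeric identifier has a leading zero: {identifier!r}")
    return settings
```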

Every time we commit a change to this file (typically an update to one of its version numbers), the build number resets to 0. We’ll explore how this happens in the next section covering our build submodule, which contains all common build-related scripts and assets.

Build Submodule

All Bad Echo software uses the same scripts to handle the building, versioning, and deployment of compiled assets. To avoid redundant code, I created a single repo to hold all build assets, which other repos can reference as a submodule.

You can view the Bad Echo common build repository here. Other repos reference it as a submodule by running the following command:

```shell
git submodule add https://github.com/BadEcho/build.git
```

This will create a build folder in the root directory of the repo. The build workflows we’ll examine later expect the build assets to be present at this location.

The two assets we care most about in our build submodule are the build script and the push script.

Build Script

Our workflow uses the build script to compile, test, and package our software assets. It accepts several parameters:

- Commit ID (optional)

- Version distance (only required if commit ID provided)

- Value indicating if tests should be skipped

The commit ID is the short hash of the commit responsible for the build, and it is the value we use as the build metadata. We only provide it when we’re creating a prerelease build.

The version distance is the number of commits made since we last updated our version.json file. It’s used as the build number and only provided when creating a prerelease build.

build.ps1

```powershell
# Builds the Bad Echo solution.
param (
    [string]$CommitId,
    [string]$VersionDistance,
    [switch]$SkipTests
)

# Runs a command and throws if it reports a nonzero exit code.
function Execute([scriptblock]$command) {
    & $command
    if ($lastexitcode -ne 0) {
        throw ("Build command errored with exit code: " + $lastexitcode)
    }
}

# Creates a new script block consisting of a command with extra arguments appended.
function AppendCommand([string]$command, [string]$commandSuffix) {
    return [ScriptBlock]::Create($command + " " + $commandSuffix)
}

# The directory is created so its full path can be resolved, then removed so no stale
# output lingers; dotnet test and dotnet pack recreate it when writing their output.
New-Item -ItemType Directory -Force .\artifacts | Out-Null
$artifacts = Resolve-Path .\artifacts\ | Select-Object -ExpandProperty Path

if (Test-Path $artifacts) {
    Remove-Item $artifacts -Force -Recurse
}

$versionSettings = Get-Content version.json | ConvertFrom-Json
$majorVersion = $versionSettings[0].majorVersion
$minorVersion = $versionSettings[0].minorVersion
$patchVersion = $versionSettings[0].patchVersion

$buildCommand = { & dotnet build -c Release `
    -p:MajorVersion=$majorVersion -p:MinorVersion=$minorVersion `
    -p:PatchVersion=$patchVersion }

$packCommand = { & dotnet pack -c Release --no-build `
    -p:PackageOutputPath=$artifacts -p:MajorVersion=$majorVersion `
    -p:MinorVersion=$minorVersion -p:PatchVersion=$patchVersion }

# Prerelease builds pass the extra properties Directory.Build.props uses for the version suffix.
if ($CommitId -and $VersionDistance) {
    $prereleaseId = $versionSettings[0].prereleaseId
    $versionCommand = "-p:BuildMetadata=$CommitId -p:PrereleaseId=$prereleaseId -p:BuildNumber=$VersionDistance"

    # PowerShell functions take space-separated arguments; AppendCommand(a, b) would pass one array.
    $buildCommand = AppendCommand $buildCommand.ToString() $versionCommand
    $packCommand = AppendCommand $packCommand.ToString() $versionCommand
}

Execute { & dotnet clean -c Release }
Execute $buildCommand

if (-not $SkipTests) {
    Execute { & dotnet test -c Release --results-directory $artifacts --no-build -l trx `
        --verbosity=normal }
}

Execute $packCommand
```

The result of running this script will be .nupkg files for each software project, properly versioned at both the assembly and package levels.
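To make the flow of the script easier to follow, here is a Python sketch that builds the same sequence of dotnet invocations as argument lists without running them. The `dotnet_commands` function is a hypothetical restatement, not a replacement for build.ps1. It assumes `settings` holds the parsed contents of version.json. Just as in the script, the prerelease properties are added only when both a commit ID and a version distance are supplied.

```python
from typing import List


def dotnet_commands(settings: dict, artifacts: str, commit_id: str = "",
                    version_distance: str = "", skip_tests: bool = False) -> List[List[str]]:
    """Return, in order, the dotnet invocations build.ps1 would run."""
    version_args = [
        f"-p:MajorVersion={settings['majorVersion']}",
        f"-p:MinorVersion={settings['minorVersion']}",
        f"-p:PatchVersion={settings['patchVersion']}",
    ]
    # Prerelease builds only: both values are required, as in the script.
    if commit_id and version_distance:
        version_args += [
            f"-p:BuildMetadata={commit_id}",
            f"-p:PrereleaseId={settings['prereleaseId']}",
            f"-p:BuildNumber={version_distance}",
        ]
    commands = [
        ["dotnet", "clean", "-c", "Release"],
        ["dotnet", "build", "-c", "Release", *version_args],
    ]
    if not skip_tests:
        commands.append(["dotnet", "test", "-c", "Release", "--results-directory", artifacts,
                         "--no-build", "-l", "trx", "--verbosity=normal"])
    commands.append(["dotnet", "pack", "-c", "Release", "--no-build",
                     f"-p:PackageOutputPath={artifacts}", *version_args])
    return commands
```

Each list could be handed to `subprocess.run(cmd, check=True)`. That call raises on a nonzero exit code, which mirrors what the script's Execute function does.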

Push Script

The next script we have is the push script, which is used by our workflow to deploy our assembled packages to the desired package repository.

push.ps1

```powershell
# Pushes Bad Echo packages to a package repository.
$scriptName = $MyInvocation.MyCommand.Name
$artifacts = ".\artifacts"

if ([string]::IsNullOrEmpty($Env:PKG_API_KEY)) {
    Write-Host "${scriptName}: PKG_API_KEY has not been set; no packages will be pushed."
}
elseif ([string]::IsNullOrEmpty($Env:PKG_URL)) {
    Write-Host "${scriptName}: PKG_URL has not been set; no packages will be pushed."
}
else {
    Get-ChildItem $artifacts -Filter "*.nupkg" | ForEach-Object {
        Write-Host "${scriptName}: Pushing $($_.Name) to repository."
        # Pass the full path explicitly rather than relying on how the file object converts to a string.
        dotnet nuget push $_.FullName --source $Env:PKG_URL --api-key $Env:PKG_API_KEY

        if ($lastexitcode -ne 0) {
            throw ("Push command errored with exit code: " + $lastexitcode)
        }
    }
}
```

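This is a very basic script, which mainly revolves around running the dotnet nuget push command for each package in the artifacts directory.

The $Env:PKG_URL and $Env:PKG_API_KEY environment variables are supplied by the workflows: each workflow sets the package URL directly, while the API key comes from a secret defined within your GitHub repo settings.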

The package repository URL isn’t hardcoded so that we can support multiple destinations; we only sometimes want to deploy to NuGet, depending on the circumstances.

Why are the API key and package URL environment variables not command line parameters? I suppose the answer is that this script originated as one I used locally, and the environment variables seen here are defined as system environment variables on my machine.

You may, of course, feel free to change the script to accept parameters instead — bear in mind the workflows (which we’ll be exploring in a bit) will need to be updated as well.
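If you do go the parameter route, one way to keep the logic testable is to pass the settings in rather than reading them from the process environment. The Python sketch below restates push.ps1 along those lines. The `push_commands` function is hypothetical. It takes any mapping, so you could pass `os.environ` or an explicit dictionary, and it returns the `dotnet nuget push` invocations instead of running them. Like the original script, it pushes nothing unless both values are set, and it only looks at top-level `*.nupkg` files.

```python
from pathlib import Path
from typing import List, Mapping


def push_commands(artifacts: Path, env: Mapping[str, str]) -> List[List[str]]:
    """Return the `dotnet nuget push` invocations push.ps1 would run."""
    api_key = env.get("PKG_API_KEY", "")
    url = env.get("PKG_URL", "")
    if not api_key or not url:
        # Same as the script: without both values, nothing is pushed.
        return []
    return [
        ["dotnet", "nuget", "push", str(package), "--source", url, "--api-key", api_key]
        # Non-recursive, like Get-ChildItem -Filter "*.nupkg"; sorted for a stable order.
        for package in sorted(artifacts.glob("*.nupkg"))
    ]
```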

Now that we have the build-time assets laid out, let’s look at the entities that make use of them: our workflows.

Workflow Templates

GitHub Actions workflows are automated processes that run one or more jobs in response to some repository-level event.

We need to add workflows to every code repository in which we want automated versioning.

To simplify this process, I felt it most helpful to create several workflow templates for Bad Echo software and then apply them to each repository I wanted them to run in.

We’re now going to look at how you can create your own workflow templates, which you can apply to your repos to establish an automated versioning process.

Organizational Repository

Workflow templates need to be hosted from a special kind of GitHub repository. GitHub doesn’t give a nice, human-readable name for this repository type, so I refer to them as organizational repositories.

To create an organizational repository, create a new public repo on GitHub named .github. You don’t need an organization account to create one; a personal account will do just fine.

Inside your new repo, create a directory named workflow-templates. This is where all of our workflow templates will live.

Each workflow template requires the following:

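- workflow.properties.json: Configuration for the workflow, containing settings such as display name, description, etc. Its base name must match that of the workflow’s YAML file (for example, push.yml and push.properties.json).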

- workflow.yml: The base YAML of the workflow.

- Icon.svg: An icon for our workflow, perhaps optional, but why not give it some flair?

Now that we’ve covered these fundamentals, let’s look at the two workflow templates we’ll be defining for use in our repositories.

Push/CI Workflow

The first workflow we’ll define will run every time a change is pushed to one of our repositories. It will compile the code, version it as a prerelease build, run tests, and publish the packages to a development/unstable package repository feed (i.e., not NuGet).

Using it will provide continuous integration to the repository it runs in.

push.yml

```yaml
# On a push to source control, this compiles a release build for the repository's code, runs
# tests, and then publishes new development builds to MyGet.
name: CI on Push

on:
  push:
    branches:
      - master
    paths-ignore:
      - '.github/workflows/**'
      - '!.github/workflows/push.yml'
  pull_request:
    branches:
      - master

jobs:
  build:
    runs-on: windows-2022
    steps:
      - name: Checkout
        uses: actions/checkout@v3
        with:
          submodules: recursive
          fetch-depth: 0
      - name: Setup .NET
        uses: actions/setup-dotnet@v3
        with:
          dotnet-version: 7.0.x
      - name: Build
        shell: pwsh
        run: .\build\build.ps1 $(git rev-parse --short HEAD) $(git rev-list --count "$(git log -1 --pretty=format:"%H" version.json)..HEAD")
      - name: Push to MyGet
        env:
          PKG_URL: https://www.myget.org/F/bad-echo/api/v3/index.json
          PKG_API_KEY: ${{ secrets.MYGET_API_KEY }}
        run: .\build\push.ps1
        shell: pwsh
      - name: Artifacts
        if: always()
        uses: actions/upload-artifact@v2
        with:
          name: artifacts
          path: artifacts/**/*
```

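Let’s take a look at what this does. The majority of it is standard boilerplate: we check out the latest version of our code (including the build submodule) and set up a .NET environment to build our code in. Note the fetch-depth: 0 setting. By default, the checkout action fetches only a single commit, but the commit counting performed in the Build step needs the repository’s full history.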

The main point of interest is the Build step, which is where we invoke our build.ps1 script. Let us break down the parameters.

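The short commit ID parameter is provided with the following:

```powershell
$(git rev-parse --short HEAD)
```

This rev-parse command simply returns the short hash of HEAD, the commit being built. This is our build metadata.

The version distance parameter is provided with the following:

```powershell
$(git rev-list --count "$(git log -1 --pretty=format:"%H" version.json)..HEAD")
```

The inner git log command returns the full hash of the most recent commit that modified version.json. The rev-list command then counts the commits reachable from HEAD that are not reachable from that commit. In other words, it counts the commits made since version.json last changed. This is our build number.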

We could use git rev-list -1 HEAD version.json instead of git log -1 --pretty=format:"%H" version.json to find the commit that starts the counted range, but what we have works all the same.
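To see what the build number actually counts, the following Python sketch models `git rev-list --count base..head` on a toy commit graph. The commit names and the `parents` dictionary are hypothetical stand-ins for real hashes. The range `base..head` means "commits reachable from head that are not reachable from base". On a linear history, that is simply the number of commits after the last version.json change. After a merge, the commits from the merged branch count too.

```python
from typing import Dict, List, Set


def reachable(commit: str, parents: Dict[str, List[str]]) -> Set[str]:
    """Every commit reachable from `commit` by following parent links, including itself."""
    seen: Set[str] = set()
    stack = [commit]
    while stack:
        current = stack.pop()
        if current not in seen:
            seen.add(current)
            stack.extend(parents.get(current, []))
    return seen


def rev_list_count(base: str, head: str, parents: Dict[str, List[str]]) -> int:
    """Model of `git rev-list --count base..head`: reachable from head but not from base."""
    return len(reachable(head, parents) - reachable(base, parents))
```

With a linear history a, b, c, d in which b last touched version.json, building d yields a build number of 2. Building b itself yields 0, which is why the build number resets whenever version.json changes.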

Following the Build step, we have the Push to MyGet step, which invokes our push.ps1 script. The feed URL and API key are provided as environment variables — remember to change the URL being used here so you don’t attempt to publish to my feed!

push.properties.json

```json
{
  "name": "CI on Push Workflow",
  "description": "Bad Echo starter CI workflow.",
  "iconName": "Icon",
  "filePatterns": [
    "version.json$",
    "build"
  ]
}
```

This sets the name and description for our workflow; it also establishes some requirements via the filePatterns key that the repository must meet to use this workflow:

- A version.json file must be present in the repository

- A build directory must exist, which hopefully points to our build Git submodule.

Feel free to omit the filePatterns property entirely, however. My testing indicates that it has no effect, as I can apply this workflow to repositories even if they lack the version.json file, the build directory, or both.
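GitHub’s documentation describes filePatterns entries as regular expressions matched against files in the repository’s root directory. If you do keep them, two regex details are worth knowing, and the Python sketch below shows both. First, the dot in "version.json$" is unescaped, so it matches any character. Second, "build" is unanchored, so it matches any name containing that substring. The `suggests_template` function is hypothetical. It assumes unanchored, case-sensitive searches in which a match on any pattern counts, which is an approximation for illustration and not GitHub’s actual implementation.

```python
import re
from typing import Iterable


def suggests_template(root_entries: Iterable[str], file_patterns: Iterable[str]) -> bool:
    """True if any pattern finds a match in any root-level entry name.

    Assumes unanchored, case-sensitive regex searches (an approximation of
    GitHub's behavior for illustration only).
    """
    names = list(root_entries)
    return any(re.search(pattern, name) for pattern in file_patterns for name in names)
```

Writing the pattern as `version\.json$` (escaped inside the JSON string as "version\\.json$") would match only a literal version.json.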

Publish Workflow

The next workflow we’ll be defining will be responsible for creating new stable releases of our software. It will compile the code, version it as a release build, create a tag for the release, and publish the packages to an official NuGet package repository feed.

There are several essential differences between our publish and push/CI workflows. Most notably, the publish workflow will not run in response to some repository-level event, but rather when triggered manually.

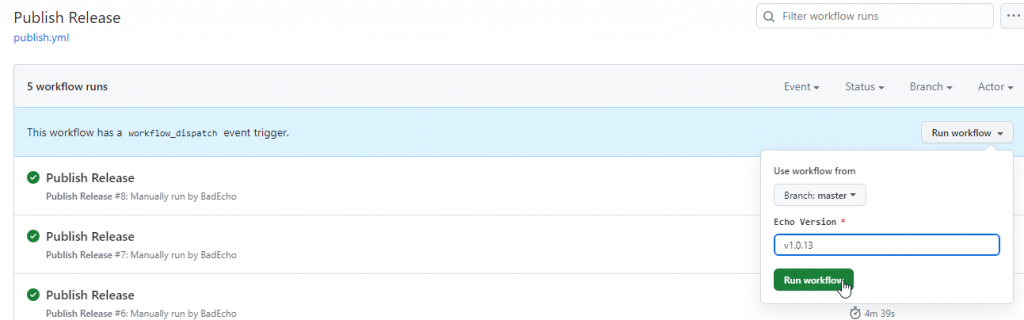

For a workflow to be run manually, GitHub requires us to configure the workflow to run on the workflow_dispatch event.

Configuring the workflow to be manually triggerable will allow us to kick it off from our repository’s GitHub Actions page, while also letting us provide inputs that tailor the run to the experience we want.

publish.yml

```yaml
# Publishes a new release of Bad Echo software to official NuGet feeds.
# To configure this workflow, replace variables with their correct values in the "env" section below.
name: Publish Release

on:
  workflow_dispatch:
    inputs:
      echo-version:
        description: Echo Version
        required: true

jobs:
  publish:
    name: Publish
    runs-on: windows-2022
    env:
      product-name: Bad Echo Software # Replace with the software product being published. Appears in the release tag commit message.
    steps:
      - name: Checkout
        uses: actions/checkout@v3
        with:
          submodules: recursive
          fetch-depth: 0
      - run: git config --global user.email "chamber@badecho.com"
      - run: git config --global user.name "Echo Chamber"
      - name: Create Echo Version
        run: |
          git tag -a ${{ github.event.inputs.echo-version }} HEAD -m "${{ env.product-name }} ${{ github.event.inputs.echo-version }}"
          git push origin ${{ github.event.inputs.echo-version }}
      - name: Setup .NET
        uses: actions/setup-dotnet@v3
        with:
          dotnet-version: 7.0.x
      - name: Build
        shell: pwsh
        run: .\build\build.ps1
      - name: Push to NuGet
        env:
          PKG_URL: https://api.nuget.org/v3/index.json
          PKG_API_KEY: ${{ secrets.NUGET_API_KEY }}
        run: .\build\push.ps1
        shell: pwsh
      - name: Artifacts
        if: always()
        uses: actions/upload-artifact@v2
        with:
          name: artifacts
          path: artifacts/**/*
```

The workflow takes a single echo-version string input from the user, which it uses as the tag name for the release tag it creates. Additionally, a product-name environment variable is defined, which we use as part of the tag’s message; however, this is meant to be configured inside the applied workflow itself and not provided when running the workflow.

This input value does not affect the actual version that the software gets packaged up as (version.json is still king in this regard). It is merely the label we’ll tag onto this specific point in our repository’s history, indicating that we’ve made a release.

I typically will provide version labels such as “v1.0.7”, “v1.0.8”, and so on.

If we look at the Build step, we’ll see our build.ps1 script is yet again being invoked; however, no arguments are provided. That’s because this is a stable release build, and only three version numbers will be present on the packaged output (the major, minor, and patch version numbers).

Following the Build step, we have the Push to NuGet step. Once again, remember to change the feed URL to your own.

Increment the Version After a Release!

It should be noted that after this workflow runs, we will have a stable release build for our code whose version is of the format Major.Minor.Patch with values sourced from version.json.

No further builds should be created using these version numbers following the release — either the major, minor, or patch number should be incremented in the version.json file before any other changes occur.

It would be neat if the workflow automated this or if a safeguard could be put in to prevent additional builds using a released version number; unfortunately, it’s not something I’ve gotten around to yet.
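As a starting point for such a safeguard, here is a Python sketch of the check. It is hypothetical, not something the Bad Echo workflows do today. It assumes release tags follow the "v1.0.7" convention mentioned above and that the tag list comes from something like `git tag --list`. Since the workflow’s input label is independent of version.json, the check only works if you keep the two in sync. If the version numbers currently in version.json have already been tagged, it raises an error, and a CI step could run it before building.

```python
import re
from typing import Iterable, Set, Tuple

# Release tags are assumed to follow the "v1.0.7" convention used in this article.
_RELEASE_TAG = re.compile(r"v([0-9]+)\.([0-9]+)\.([0-9]+)")


def released_versions(tags: Iterable[str]) -> Set[Tuple[int, ...]]:
    """Collect (major, minor, patch) triples from tags that look like release labels."""
    released = set()
    for tag in tags:
        match = _RELEASE_TAG.fullmatch(tag)
        if match:
            released.add(tuple(int(group) for group in match.groups()))
    return released


def ensure_unreleased(settings: dict, tags: Iterable[str]) -> None:
    """Raise if version.json still holds a version that has already been tagged."""
    current = (settings["majorVersion"], settings["minorVersion"], settings["patchVersion"])
    if current in released_versions(tags):
        version = ".".join(str(part) for part in current)
        raise RuntimeError(f"{version} was already released; bump version.json first")
```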

publish.properties.json

```json
{
  "name": "Publish Release Workflow",
  "description": "Bad Echo starter publishing workflow.",
  "iconName": "Icon",
  "filePatterns": [
    "version.json$",
    "build"
  ]
}
```

This is congruous with our push/CI workflow’s settings file, save for the different name and description values. Not much is happening here; we’re simply ensuring the workflow has an identifiable name.

Once these files are set up and saved to disk, commit them and push the commit to your .github repository. We’re now ready to apply some automatic versioning to our GitHub repos.

Putting It All Together

Now that we have these workflow templates published to our organizational repo, we can easily apply them to all the code repos in which we want streamlined automatic versioning.

Configuring Secrets

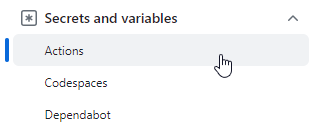

Before we add our workflows, we’ll need to set up any repository secrets required by the workflows. Unfortunately, I could not discover a way to add account-wide secrets for a personal GitHub account; however, the option to add organization-wide secrets does exist if you have an organization account.

If you’re like me and rock a personal account, then for every repository you want to add the workflows to, you’ll need to click the Settings button on the repository’s page and browse to the repository’s Actions secrets (under Secrets and variables, then Actions).

Once there, you can create the secrets your workflows will need. The push/CI workflow expects a MYGET_API_KEY secret, and the publish workflow expects a NUGET_API_KEY secret.

With our secrets defined, we can finally apply our workflow templates to our repositories.

Adding the Workflows

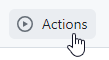

First, you’ll want to browse to your repository on github.com and then click on the Actions button.

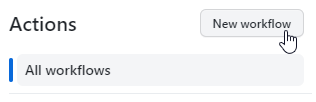

If you’ve previously added some actions to your repository, they will be listed here, and you will need to click the New workflow button.

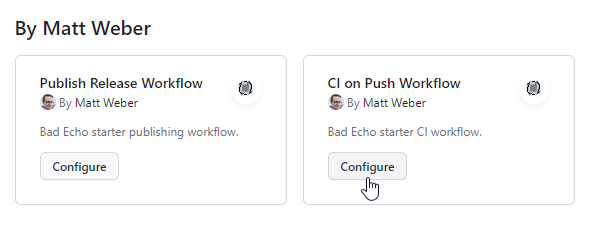

Otherwise, you’ll be presented with a page that allows you to search for a new workflow to add. Positioned near the top of this page, you should see the organizational workflows you just published.

Clicking on the Configure button will bring us to an editor with our workflow’s YAML loaded into it. You can make any changes you need here and then hit Commit changes… to finish adding the workflow to our repository.

If we do this for both of our workflows, we will have a repository with a continuous integration process running every time we push changes to it and a manually triggered process that will publish new stable releases.

Whenever we want to publish a new stable release, we simply browse to the workflow via the repository’s Actions page, click the Run workflow button, and enter the version label to tag the release with.

This will compile a new release build, push it off to NuGet, and tag the current commit with the version label provided as the workflow’s input.

The processes documented in this article are what I currently use over at Bad Echo, and they do their job well. If I make any significant changes or improvements to the system, I’ll be sure to write about it.